Nvidia’s next-generation artificial intelligence GPU, called Blackwell, will cost between $30,000 and $40,000 apiece, CEO Jensen Huang told CNBC’s Jim Cramer on Tuesday’s “Squawk on the Street.”

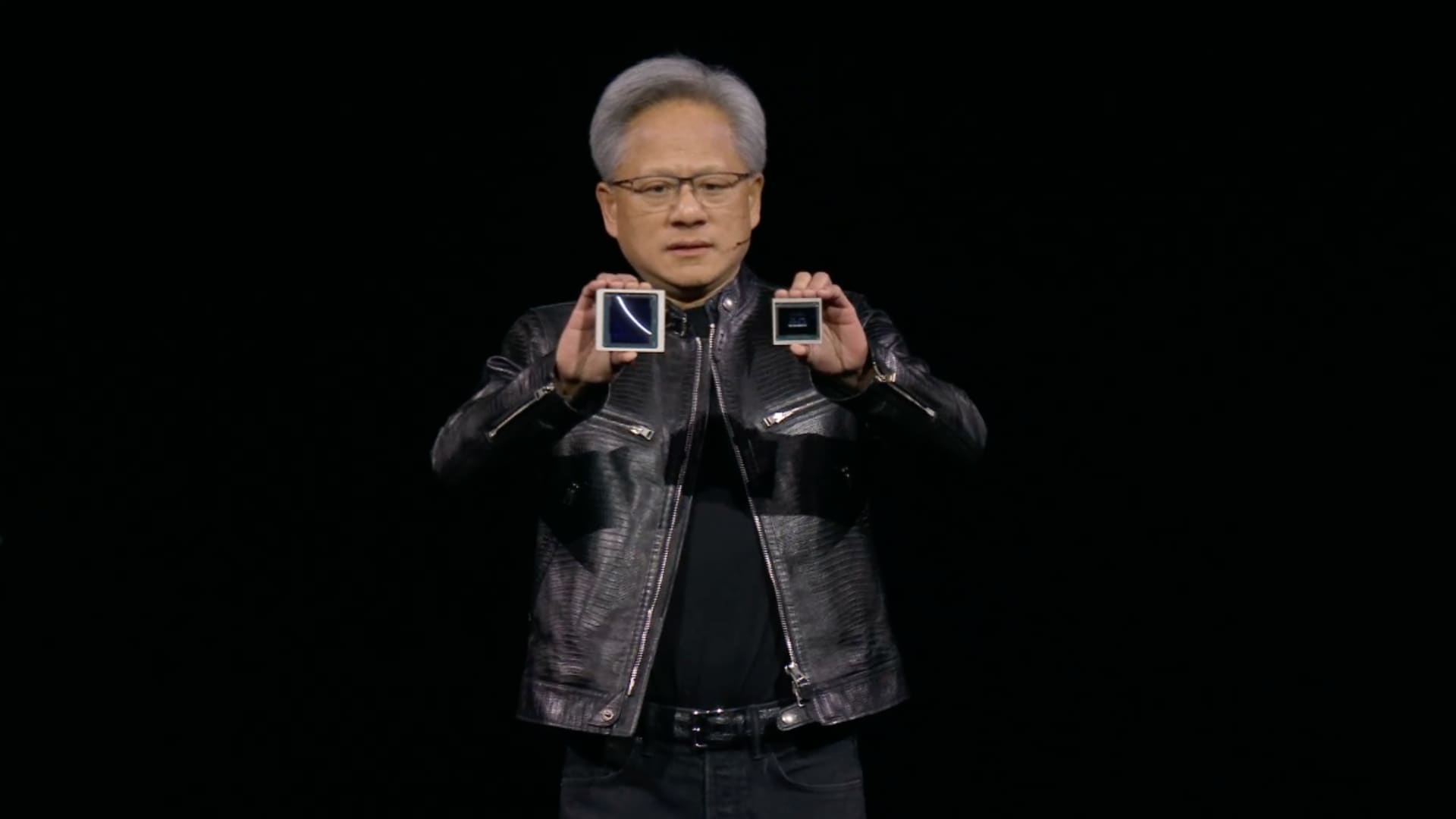

“We had to invent some new technology to make it possible,” Huang said, holding up a Blackwell chip. He estimated that Nvidia spent about $10 billion on research and development.

The price suggests the chip, which is likely to be in high demand for training and deploying AI software like ChatGPT, will sit in a similar price range to its predecessor, the H100, known as Hopper, which costs between $25,000 and $40,000 per chip, according to analysts’ estimates. The Hopper generation, introduced in 2022, represented a significant price increase for Nvidia’s AI chips over the previous generation.

Huang later told CNBC’s Kristina Partsinevelos that the price was not just for the chip but also for designing data centers and integrating into other companies’ data centers.

Nvidia announces a new generation of AI chips every two years. The newest chips, like Blackwell, tend to be faster and more energy efficient, and Nvidia uses the publicity surrounding a new generation to garner orders for new GPUs. Blackwell combines two chips and is physically larger than the previous generation.

Nvidia’s AI chips have tripled the company’s quarterly sales since the AI boom began in late 2022, when OpenAI’s ChatGPT was announced. Most of the leading AI companies and developers have used Nvidia’s H100 to train their AI models over the past year. For example, Meta said this year that it is buying hundreds of thousands of Nvidia H100 GPUs.

Nvidia does not disclose list prices for its chips. They come in several different configurations, and the price an end user like Meta or Microsoft pays depends on factors such as the volume of chips purchased and whether the customer buys the chips directly from Nvidia as part of a complete system or through a vendor such as Dell, HP or Supermicro that builds AI servers. Some servers are built with up to eight AI GPUs.

On Monday, Nvidia announced at least three different versions of the Blackwell AI accelerator – the B100, B200 and GB200, which combines two Blackwell GPUs with an Arm-based processor. They have slightly different memory configurations and are expected to ship later this year.

https://www.cnbc.com/2024/03/19/nvidias-blackwell-ai-chip-will-cost-more-than-30000-ceo-says.html